“Because of your work, I can do my life’s work in my lifetime.”

— somebody to Jensen Huang

I am 33 years old. I recently discovered that my still-active torrent account is 17 years old, which means I created it when I was about 16. I am a child of the internet. Back then, if my grandmother picked up the vintage rotary phone, my dial-up connection would drop in an instant and the 54% progress I’d made on a 2.4 MB download at 55 Kb/s would vanish.

Reflecting on that period and the technological progress since, I’m taken aback. We’ve gone from 2am chatter on mIRC and burning CDs filled with games, music, and adult content from DC++ to AI winning gold medals at the IMO, AI we can now run from our laptops on a 700 Mbps+ home network.

What changed?

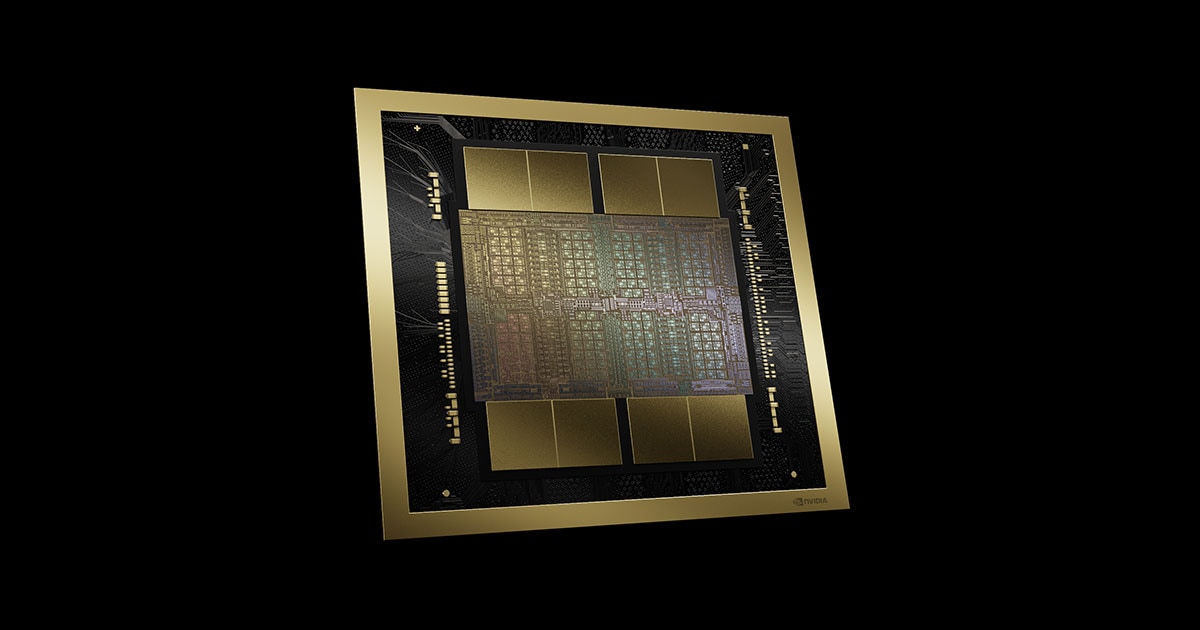

The Silicon Dynasty

While we all know who Jensen Huang is today, he came from humble origins. Contrary to widespread belief, he rose to an emperor’s rank through a rebellion led by those who believed in the silicon dynasty. The rebels fought against the sluggish status quo. Rebels like Alex Krizhevsky. Krizhevsky’s success planted a seed, the seed of a revolution whose fruits we now reap.

Today we have tinyboxes, DGX Spark, and Mac Mini clusters. Still spicy, but far more accessible than anyone thought possible. The silicon dynasty lets me call Kimi K2 via Groq at 434 tok/s. I can quickly iterate and build armed with nothing but a laptop. If you’re like me, you equate speed with superiority. I don’t mean gpt-4.1-mini being “better” than o3-pro just because it’s faster; I mean Groq’s inference serving equally potent models faster than OpenAI’s. I’m fairly convinced this principle holds broadly. My point is simple: you either ship Kimi K2 at 434 tok/s, or you don’t.

Under these conditions, empirical science thrives. If I can run the same experiment a million times in minutes, cleverness becomes a gratuity. I can summon statistics to probe my experimental setup. What did OpenAI and Google learn from solving IMO-level problems in 4.5 hours? Researchers at elite labs are extremely clever; that much goes without saying. But the competition is cutthroat and resource management is essential. For example, you don’t invest in custom CUDA kernels unless you’re DeepSeek, choking under the pressure of being too slow.
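To make "summon statistics" concrete, here is a minimal sketch of what cheap repetition buys. The experiment is a stand-in I made up for illustration (a coin that succeeds 30% of the time), and `run_experiment` and `estimate_success_rate` are hypothetical names, not anyone's actual setup. The point is only that the uncertainty of the estimate shrinks as the trial count grows.

```python
import random

def run_experiment(rng):
    """Hypothetical noisy experiment: succeeds with probability 0.3 (an assumption)."""
    return rng.random() < 0.3

def estimate_success_rate(n_trials, seed=0):
    """Repeat the experiment n_trials times; return (estimate, standard error)."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    successes = sum(run_experiment(rng) for _ in range(n_trials))
    p_hat = successes / n_trials
    # Normal-approximation standard error of a proportion.
    stderr = (p_hat * (1 - p_hat) / n_trials) ** 0.5
    return p_hat, stderr

if __name__ == "__main__":
    for n in (100, 10_000, 1_000_000):
        p_hat, stderr = estimate_success_rate(n)
        print(f"n={n:>9,}  estimate={p_hat:.4f}  stderr={stderr:.5f}")
```

The standard error falls roughly as one over the square root of the number of trials, so a hundred times more runs gets you about ten times less noise. When runs are nearly free, brute repetition can stand in for a lot of cleverness.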

I need to run as fast as you do, but you have a Bugatti Veyron and I don’t. I can’t slow you down. My only option is to race on a different medium. So I’ll build a janky plane from car scraps and finish the race before you.

Speed is critical.

The Box Contains Everything

I can’t say I cherished Devs unreservedly, but there were a few impeccable shots. For instance, this one. Before you continue reading, go watch that clip.

If computable numbers can be represented by Turing machines, mathematics is the language with which God has written the universe, and the dynamics of nature are computable\(^*\), then a computer can master that language. In its most startling form, we call it a sim. According to the smart ones, it goes like this: every physically realizable process is Turing-computable. This is the physical Church-Turing thesis. In principle, a tinybox could contain everything.
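For a feel of what "computable number" means here: a real number is computable when some finite program can produce its digits to any precision you ask for. A minimal sketch, with \(\sqrt{2}\) chosen by me as the example and `sqrt2_digits` a hypothetical helper name:

```python
from math import isqrt

def sqrt2_digits(n):
    """Return sqrt(2) truncated (not rounded) to n decimal places, as a string."""
    # isqrt works on exact integers, so no floating-point error creeps in:
    # floor(sqrt(2 * 10**(2n))) == floor(sqrt(2) * 10**n)
    scaled = isqrt(2 * 10 ** (2 * n))
    s = str(scaled)
    return s[0] + "." + s[1:] if n > 0 else s

if __name__ == "__main__":
    print(sqrt2_digits(10))
    print(sqrt2_digits(50))
```

The number has infinitely many digits, yet a few lines of code hold all of them, in the sense that any digit is there on request. That is the small version of the box containing everything.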

Depending on whom you ask, an LLM is a sim. It uses language as a proxy for simulation exercises. Furthermore, verbal reasoning is our civilization’s oldest compression scheme for thought. Across centuries, we’ve distilled complex chains of logic, intuition, and abstraction into shared textual formats. It’s error-prone, but it’s also the only medium that has scaled collective reasoning across billions of minds and thousands of years. Moreover, according to Vann McGee, it’s decidable:

“Recursion theory is concerned with problems that can be solved by following a rule or a system of rules. Linguistic conventions, in particular, are rules for the use of a language, and so human language is the sort of rule-governed behavior to which recursion theory applies. Thus, if, as seems likely, an English-speaking child learns a system of rules that enable her to tell which strings of English words are English sentences, then the set of English sentences has to be a decidable set. This observation puts nontrivial constraints upon what the grammar of a natural language can look like. As Wittgenstein never tired of pointing out, when we learn the meaning of a word, we learn how to use the word. That is, we learn a rule that governs the word’s use.”

Thus, it’s not too far-fetched to conceive that human reasoning can be simulated. To some degree, the cloud tinyboxes contain it already.

\(^*\) There are classical results showing that computable initial data for the wave equation can evolve into non-computable states (Pour-El & Richards, 1981), with a stronger “nowhere-computable” version due to Pour-El & Zhong (1997).